A Laser-based AEB System: How Will It Change the Game?

Author | Zhu Shiyun

Editor | Qiu Kaijun

“Now, in our cars equipped with AD Max intelligent driving, even when driving at speeds below 70 km/h without the headlights on, the AEB (Automatic Emergency Braking) system will still slow down or even stop the car when approaching a stationary vehicle,” Yang Jie, Ideal Auto’s Active Safety Product Manager, told EV Observer. “Of course, we do not encourage car owners to test this feature actively.”

In December 2022, Ideal Auto released OTA version 4.2, which officially incorporated lidar into its self-developed AEB system as a perception input that participates in braking decisions. Prior to this, the mainstream perception solution for AEB was visual detection combined with millimeter-wave radar, with variants distinguished by camera resolution and by monocular or binocular (stereo) setup.

The capability of active safety systems, with AEB as their representative, marks the safety boundary of high-level intelligent driving functions.

To what extent could the addition of lidar expand AEB’s capabilities? Is there still a division between advanced intelligent driving and active safety? Why are Chinese automakers moving into AEB, traditionally the territory of international T1s (tier-one automotive component suppliers)?

30m-50m-80m

The richness of sensors has always been the core driving force for improving AEB performance.

In 2008, Volvo introduced its first AEB system, which used a millimeter-wave radar as the primary sensor.

In the early days, radar produced few detection points and often missed small obstacles such as pedestrians. Because radar signal processing filters out static clutter, stationary and slow-moving targets were also hard to identify.

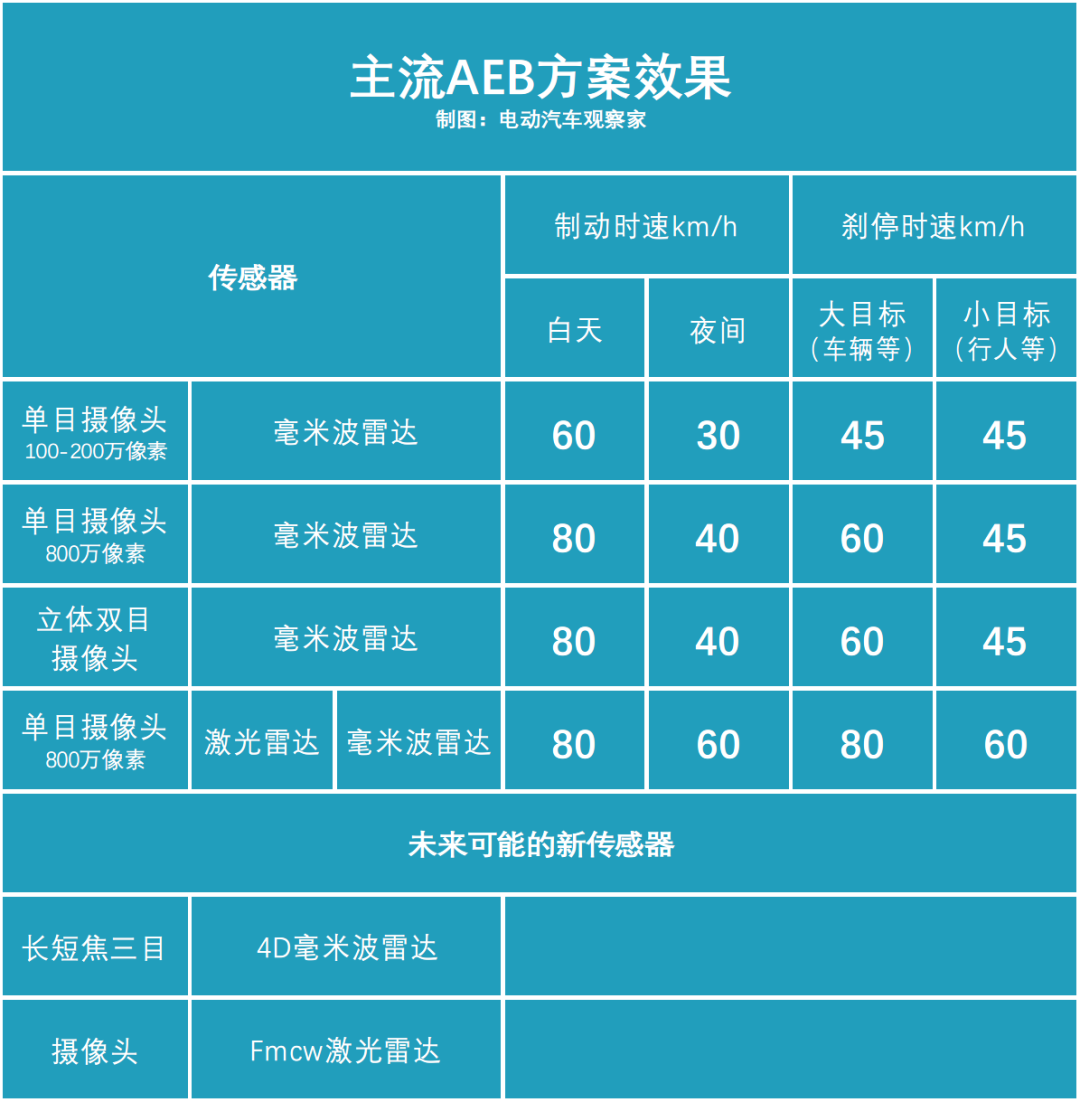

As a result, AEB systems with millimeter-wave radar as the core sensor could only be triggered at speeds up to 30 km/h and, to avoid false triggering, often did not react to small or stationary targets.

Around 2015, perception sensors represented by cameras were increasingly introduced into ADAS systems.

By combining a monocular camera with a millimeter-wave radar, the upper limit of AEB triggering speed was raised to 60 km/h, and this has become the mainstream solution in the industry.

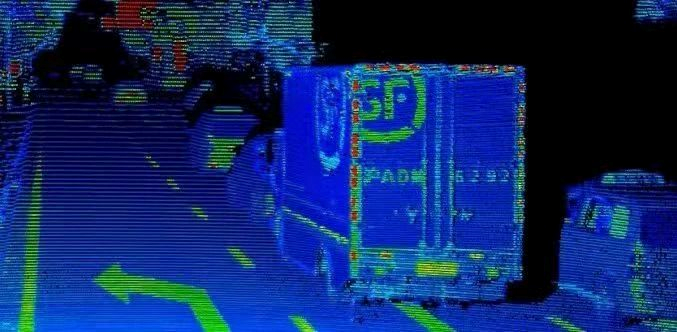

In 2022, LiDAR's entry into production provided the hardware foundation for expanding AEB's application scenarios.

Compared with the visual+millimeter wave radar solution, LiDAR can directly provide accurate speed and distance information, and can also classify large and small targets to improve perception accuracy. Therefore, AEB can trigger functions at more precise distance points, reduce false triggering, and make greater use of the vehicle’s braking limit.

Currently, the vision + LiDAR + millimeter-wave radar solution of the Ideal AD Max has raised the maximum speed from which AEB can bring the car to a full stop to 80 km/h, which covers most urban driving scenarios.

In addition, Subaru’s solution of stereo cameras + millimeter-wave radar is also an important member of the AEB “family”.

The stereo camera can calculate 3D information through geometric algorithms and can also apply machine learning, so its ceiling is very high, and it is considered a technology route with the potential to replace LiDAR.

Currently, a stereo camera costs less than 1000 yuan, a strong cost advantage over LiDAR, which costs more than 5000 yuan per unit. However, because of the strict symmetry requirements on imaging, components, and layout, the stereo camera is difficult to engineer. Its maximum braking speed at night, and for both large and small targets, is still slightly lower than that of the LiDAR solution.

After covering urban conditions, the next important direction for AEB is to raise the upper trigger limit to 120 km/h, so that in an emergency the vehicle can slow from 120 km/h to 60 or even 40 km/h within 2-3 seconds, giving drivers more time and space to avoid an accident or reduce its severity.
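To get a feel for what that target implies, the following is a minimal kinematics sketch, assuming constant deceleration over the whole interval (a simplification; real braking builds up over time). The function names are illustrative and do not come from any vendor's software.

```python
G = 9.81  # standard gravity in m/s^2, used only to express results in g


def kmh_to_ms(speed_kmh: float) -> float:
    """Convert a speed from km/h to m/s."""
    return speed_kmh / 3.6


def required_deceleration(v_start_kmh: float, v_end_kmh: float, duration_s: float) -> float:
    """Constant deceleration (m/s^2) needed to slow from v_start to v_end in duration_s."""
    return (kmh_to_ms(v_start_kmh) - kmh_to_ms(v_end_kmh)) / duration_s


def distance_covered(v_start_kmh: float, v_end_kmh: float, duration_s: float) -> float:
    """Distance (m) travelled while slowing at constant deceleration."""
    return (kmh_to_ms(v_start_kmh) + kmh_to_ms(v_end_kmh)) / 2 * duration_s


if __name__ == "__main__":
    # The speed drops and durations quoted in the article.
    for v_end in (60, 40):
        for duration in (2.0, 3.0):
            a = required_deceleration(120, v_end, duration)
            d = distance_covered(120, v_end, duration)
            print(f"120 -> {v_end} km/h in {duration:.0f} s: "
                  f"{a:.1f} m/s^2 ({a / G:.2f} g), {d:.0f} m travelled")
```

Under these assumptions, slowing from 120 to 60 km/h needs about 8.3 m/s² over 2 seconds or about 5.6 m/s² over 3 seconds. Slowing from 120 to 40 km/h in 2 seconds would need about 11.1 m/s², which is more than 1 g, so the 3-second end of the range is likely the more realistic one for the larger speed drop.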

How does AEB work with LiDAR?

AEB and ADAS features both use vision + LiDAR + millimeter-wave radar. Do they still need to be divided into “yours” and “mine” on the same car? Yang Jie said, “Taking Ideal's AEB system as an example, its functional requirements for perception software still differ from those of ADAS features.”

In terms of task goals, intelligent driving functions are activated by the driver and work under human supervision. They need to “see” far, at least 150-200 meters ahead at 120 km/h, classify targets by priority and attributes, and track them continuously in space and time.

AEB, however, is a hard real-time system that starts when the vehicle is powered on; it must meet its task deadlines, or unpredictable results may occur. It needs to perceive and react quickly, considering obstacles within 80 meters that may pose a collision risk, and to some extent drawing on semantic information such as lane lines to judge “special obstacles” such as sharp curves.
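The two perception ranges can be compared as time budgets. The sketch below converts a distance and a vehicle speed into the time needed to cover that distance, assuming a stationary obstacle and constant speed; `time_to_reach` is a hypothetical helper name used only for illustration.

```python
def kmh_to_ms(speed_kmh: float) -> float:
    """Convert a speed from km/h to m/s."""
    return speed_kmh / 3.6


def time_to_reach(distance_m: float, speed_kmh: float) -> float:
    """Seconds until a vehicle at constant speed covers distance_m."""
    if speed_kmh <= 0:
        raise ValueError("speed must be positive")
    return distance_m / kmh_to_ms(speed_kmh)


if __name__ == "__main__":
    # Intelligent driving: 150-200 m look-ahead at 120 km/h.
    for distance in (150, 200):
        print(f"{distance} m at 120 km/h: {time_to_reach(distance, 120):.1f} s")
    # AEB: obstacles within 80 m, at the 80 km/h and 120 km/h speeds discussed earlier.
    for speed in (80, 120):
        print(f"80 m at {speed} km/h: {time_to_reach(80, speed):.1f} s")
```

Under these assumptions the driving functions look roughly 4.5 to 6 seconds ahead, while the 80-meter AEB range corresponds to about 3.6 seconds at 80 km/h and about 2.4 seconds at 120 km/h.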

Ideal AEB system field test: disappearing car ahead

<video controls class="w-full" preload="metadata" poster="https://42how-com.oss-cn-beijing.aliyuncs.com/article/image_20230214162650.png">

<source src="https://upload.42how.com/temp/video_1676363261975.mp4">

</video>

**In terms of confidence, in good daylight the AEB system relies primarily on vision, with lidar and millimeter-wave radar participating in perception fusion; in poor light at night, lidar is the primary sensor, and the other sensors provide fusion verification.**
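The switch of primary sensor described above can be sketched as a simple selection rule. This is an illustration only: the article does not say how Ideal measures lighting or where the switch happens, so the `ambient_lux` input and the `daylight_threshold_lux` default are assumptions made for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Sensor(Enum):
    CAMERA = "camera"
    LIDAR = "lidar"
    RADAR = "millimeter-wave radar"


@dataclass(frozen=True)
class FusionPlan:
    primary: Sensor                 # sensor whose detections are trusted most
    supporting: frozenset           # sensors used for fusion and cross-checking


def plan_fusion(ambient_lux: float, daylight_threshold_lux: float = 1000.0) -> FusionPlan:
    """Pick the primary sensor from lighting conditions.

    The threshold is a hypothetical placeholder, not a published value.
    """
    if ambient_lux >= daylight_threshold_lux:
        primary = Sensor.CAMERA     # good daylight: vision leads
    else:
        primary = Sensor.LIDAR      # poor light: lidar leads
    supporting = frozenset(s for s in Sensor if s is not primary)
    return FusionPlan(primary=primary, supporting=supporting)


if __name__ == "__main__":
    for lux in (20000.0, 5.0):
        plan = plan_fusion(lux)
        others = ", ".join(sorted(s.value for s in plan.supporting))
        print(f"{lux:>8.0f} lux -> primary: {plan.primary.value}; supporting: {others}")
```

A production system would more plausibly weight per-detection confidence continuously than flip on a single threshold; the hard switch here is only meant to mirror the day and night cases in the text.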

**At the perception algorithm level, Ideal's AEB and ADAS features use the same neural network perception model, but in the post-processing stage, targeted optimization is performed according to their different functional requirements.**

The decision-making algorithms, however, are developed by two separate teams for ADAS and AEB.

The scenario AEB faces is a decision made 2-3 seconds before an accident occurs, on whether to apply the brakes or request full braking, rather than the game-like interaction with other road users that driving functions face.

Yang Jie stated that **with such an extreme time constraint and such a clearly defined task, there would be no essential difference between the results of neural network and logical judgment algorithms; therefore, AEB uses logical judgment algorithms in the decision-making layer.**
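A logical judgment algorithm of this kind can be as small as a pair of threshold checks. The sketch below is not Ideal's implementation: it assumes the decision is driven by time-to-collision (TTC), and the 3-second and 2-second thresholds are hypothetical values chosen to echo the 2-3 second window mentioned above.

```python
import math
from enum import Enum


class BrakeCommand(Enum):
    NONE = "none"
    PARTIAL = "partial braking"
    FULL = "full braking"


def time_to_collision(gap_m: float, closing_speed_ms: float) -> float:
    """TTC in seconds; infinite when the gap is not shrinking."""
    if closing_speed_ms <= 0:
        return math.inf
    return gap_m / closing_speed_ms


def decide(gap_m: float, closing_speed_ms: float,
           partial_ttc_s: float = 3.0, full_ttc_s: float = 2.0) -> BrakeCommand:
    """Rule-based braking decision; both thresholds are illustrative assumptions."""
    ttc = time_to_collision(gap_m, closing_speed_ms)
    if ttc <= full_ttc_s:
        return BrakeCommand.FULL
    if ttc <= partial_ttc_s:
        return BrakeCommand.PARTIAL
    return BrakeCommand.NONE


if __name__ == "__main__":
    closing = 80 / 3.6  # approaching a stationary car at 80 km/h
    for gap in (80.0, 60.0, 40.0):
        ttc = time_to_collision(gap, closing)
        print(f"gap {gap:.0f} m, TTC {ttc:.1f} s -> {decide(gap, closing).value}")
```

Because every branch is an explicit comparison, the worst-case execution time is easy to bound, which fits a system that has to meet hard deadlines.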

**On the hardware level**, Ideal's AEB and ADAS features both belong to the intelligent driving domain, but AEB is mainly deployed on MCUs (microcontroller units), which offer high reliability, real-time performance, and safety, to ensure stable, real-time operation and provide drivers with effective safety protection.

## Battle of Iteration

In addition to the MCU, the Orin compute platform in Ideal AD Max also runs a copy of the AEB system, including the perception, fusion, and decision-making modules. Only its connection to the actuators is cut, so it acts as the "shadow" of the system running on the MCU.

**"Shadow mode" is one of the core foundations of Ideal's self-developed AEB, and has become the basis on which Ideal and other emerging automakers compete with international T1s in the AEB field.**

AEB originated in Europe and is home turf for T1s such as Bosch, Continental, and Autoliv. With sales in the millions and a global market footprint, international T1s have built their core technical barrier on emergency-braking scenario data numbering in the billions.

But precisely because of this high data barrier and a product adaptation strategy designed for the global market, international T1s are often slow to respond to the fast-changing demands of the Chinese market.

**"When we first launched the assisted driving system, there were cases where AEB triggered falsely, and cases where AEB braking performance in accidents fell short of expectations. For the sake of user safety, we wanted to iterate and optimize as soon as possible, but we found it difficult to communicate with suppliers, the cycle was very long, and in the end, we could only give up."**

**"We keep optimizing driving functions in the hope that more users will use them. But if safety capability cannot keep up, accident risk will rise as usage rate and mileage increase. Therefore, while doing a good job on assisted driving functions, we must also strengthen active safety."** Yang Jie explained the core logic of Ideal's self-developed AEB.

Ideal AEB field test: a child suddenly darting out from behind an obstruction ("ghost probe")

<video controls class="w-full" preload="metadata" poster="https://42how-com.oss-cn-beijing.aliyuncs.com/article/image_20230214162935.png">

<source src="https://upload.42how.com/temp/video_1676363400403.mp4">

</video>

Intelligent driving systems compete on data, algorithms, and computing power, while active safety competes on iteration speed.

“Every new AEB feature scenario, from software development and testing to final deployment, is a brand new iteration process for AEB.”

Developing a new AEB feature scenario on the test track is relatively easy from a technical standpoint. The hard part is making the “new ability” work in real environments and traffic conditions while keeping false positives to a minimum, and verifying it thoroughly, which requires millions or even tens of millions of miles of driving.

“At present, the iteration cycle of suppliers’ packaged solutions is usually measured in quarters, but our self-developed AEB can achieve week-level iteration,” said Yang Jie.

Top international T1s rely on data obtained from millions of mass-produced cars worldwide, while Ideal catches up through shadow mode.

Unlike advanced driving assistance, which the driver must actively switch on, AEB starts working as soon as the vehicle is powered on, which makes it inherently suitable for shadow mode. The AEB system running on Orin is compared with the driver’s operation in real time, and scenario data where the two disagree is anonymized, labeled, and recorded, forming a valuable database for iteration.
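The comparison step can be sketched as follows. This is a minimal illustration, not Ideal's pipeline: the `Frame` fields, the label strings, and the choice to anonymize by dropping a vehicle identifier are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Frame:
    timestamp_s: float
    vehicle_id: str           # identifying field, dropped before storage
    shadow_wants_brake: bool  # decision of the shadow AEB (not sent to actuators)
    driver_braked: bool       # what the driver actually did


@dataclass
class ShadowRecorder:
    records: list = field(default_factory=list)

    def observe(self, frame: Frame):
        """Store an anonymized, labeled record only when shadow and driver disagree."""
        if frame.shadow_wants_brake == frame.driver_braked:
            return None
        label = ("shadow_brake_driver_did_not" if frame.shadow_wants_brake
                 else "driver_brake_shadow_did_not")
        record = {"timestamp_s": frame.timestamp_s, "label": label}  # no vehicle_id
        self.records.append(record)
        return record


if __name__ == "__main__":
    recorder = ShadowRecorder()
    frames = [
        Frame(0.0, "car-A", shadow_wants_brake=False, driver_braked=False),
        Frame(0.1, "car-A", shadow_wants_brake=True, driver_braked=False),
        Frame(0.2, "car-A", shadow_wants_brake=False, driver_braked=True),
        Frame(0.3, "car-A", shadow_wants_brake=True, driver_braked=True),
    ]
    for f in frames:
        recorder.observe(f)
    for r in recorder.records:
        print(r)
```

The first label roughly corresponds to candidate false positives and the second to candidate misses, which are the two error types an AEB iteration would want to review.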

The verification part is left to the closed-loop simulation.

Yang Jie said, “Ideal AD’s perception, fusion, and planning-and-control modules have been decoupled for iteration, so the simulation system can verify both individual modules and the complete full-chain version.”

In addition, each model with Ideal AD has an independent road test team that runs long-term real-road tests with the latest system version before it is pushed to users.

Others have already succeeded on this path.

Volvo began promoting the AES (automated emergency steering) function in 2016, which allows the vehicle to steer itself to avoid an emergency. However, even now, it still requires the driver to provide clear steering input via the steering wheel to respond.

Later, in 2018, through rapid iteration, Tesla achieved a system that identifies danger and steers automatically without driver input.

On the basis of “shadow mode + data closed loop”, Ideal's active safety team took only two quarters to “incorporate” the lidar: the lidar, whose perception results previously appeared only on the EID interface, has now become a perception input source and participates in the AEB function’s braking decisions.

Now, besides Ideal, XPeng and NIO have also put self-developed AEB on their agendas. After Li Xiang announced last year that the AEB system would be open-sourced, Ideal also formed an internal working group to adjust the architecture of the AEB system and make it a more platform-based software system.

With improved AEB capability as its safety valve, intelligent driving gains a solid foundation for becoming a paid feature.

This article is a translation by ChatGPT of a Chinese report from 42HOW. If you have any questions about it, please email bd@42how.com.